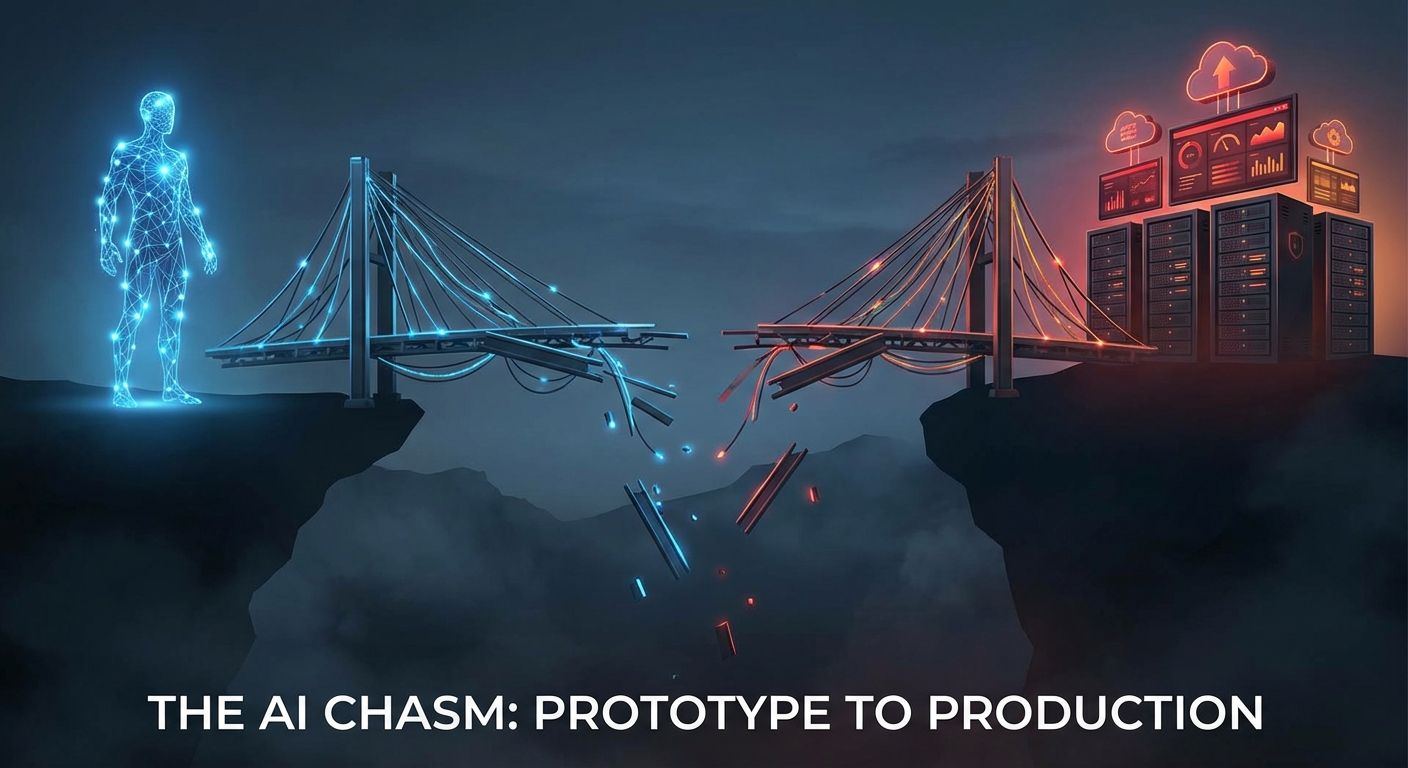

The Numbers Are Brutal

DigitalOcean's March 2026 report found that 67% of organizations report measurable gains from AI agent pilots, but only 10% successfully scale those pilots to production. The gap between a working demo and a production system is where most AI agent projects die.

A separate analysis puts the broader failure rate at 88% - meaning only 12% of enterprise AI agent initiatives reach production deployment. The average cost of a failed project is $340,000 in direct engineering spend, not counting opportunity cost or damage to organizational credibility.

These are not random failures. They follow seven predictable patterns.

Pattern 1 - Starting with Technology Instead of Workflow

The most common failure starts with a technology decision rather than a workflow decision. Teams select an agent framework, build a demo that impresses stakeholders, then discover that the demo workflow does not map to any actual business process.

Production agents need to fit into existing operational rhythms. They need defined inputs that come from real systems, outputs that feed into real downstream processes, and error handling that matches how the organization actually responds to problems.

The fix is simple but requires discipline. Start by mapping one specific multi-step workflow end to end. Document every decision point, system interaction, and exception path. Only then select the technology that fits.
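One way to make the mapping step concrete is to record the workflow as data before any agent code exists. The sketch below is only an illustration: the `Step` and `WorkflowMap` classes, their field names, and the invoice example are all hypothetical, not part of any framework.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    """One step in a mapped workflow (all field names are illustrative)."""
    name: str
    system: str                            # system the step reads from or writes to
    decision: Optional[str] = None         # decision made at this step, if any
    exception_path: Optional[str] = None   # what happens when the step fails


@dataclass
class WorkflowMap:
    name: str
    steps: List[Step] = field(default_factory=list)

    def unmapped_exceptions(self) -> List[str]:
        """Return the names of steps with no documented exception path."""
        return [s.name for s in self.steps if s.exception_path is None]


# Hypothetical example: an invoice approval workflow.
invoice_flow = WorkflowMap(
    name="invoice_approval",
    steps=[
        Step("fetch_invoice", system="erp",
             exception_path="retry, then queue for manual entry"),
        Step("check_po_match", system="erp",
             decision="does the invoice match a purchase order?"),
        Step("notify_approver", system="email",
             exception_path="escalate to finance lead"),
    ],
)
gaps = invoice_flow.unmapped_exceptions()
```

Here `gaps` lists the steps whose exception path has not been documented yet, which is a reasonable signal that the workflow is not ready for technology selection.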

Pattern 2 - No Governance from Day One

Agent systems that reach the demo stage without governance controls almost never get them added later. The architecture decisions that make demos fast - broad tool access, no approval gates, minimal logging - become technical debt that blocks production deployment.

Security teams reject agent systems that cannot demonstrate audit trails. Compliance teams block deployments without defined permission boundaries. Operations teams refuse to support systems without monitoring and alerting.

Governance is not a feature you add at the end. It is an architectural decision that shapes every component from the beginning. Define permission boundaries, approval gates, and audit logging before writing the first line of agent code.
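As a rough sketch of what those three controls can look like in code, the wrapper below checks a permission boundary, applies an approval gate, and writes an audit record for every attempted tool call. The tool names, the `governed_call` function, and the in-memory `audit_log` are assumptions made for illustration; a real system would use its own policy store and an append-only log.

```python
import json
import time
from typing import Any, Callable, Dict, List

ALLOWED_TOOLS = {"read_ticket", "draft_reply", "issue_refund"}  # permission boundary
NEEDS_APPROVAL = {"issue_refund"}                                # approval gate

audit_log: List[str] = []  # stand-in for an append-only audit store


def governed_call(tool: str, args: Dict[str, Any],
                  run: Callable[..., Any],
                  approve: Callable[[str, Dict[str, Any]], bool]) -> Any:
    """Run a tool only if it is permitted and, where required, approved."""
    if tool not in ALLOWED_TOOLS:
        outcome = "denied"
    elif tool in NEEDS_APPROVAL and not approve(tool, args):
        outcome = "rejected"
    else:
        outcome = "allowed"
    # Record every attempt before the tool runs, including denied and
    # rejected ones, so the trail survives a tool failure.
    audit_log.append(json.dumps(
        {"ts": time.time(), "tool": tool, "args": args, "outcome": outcome}))
    if outcome != "allowed":
        raise PermissionError(f"{tool}: {outcome}")
    return run(**args)
```

The point of the design is that the agent never calls a tool directly: every call passes through one function that security and compliance teams can inspect.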

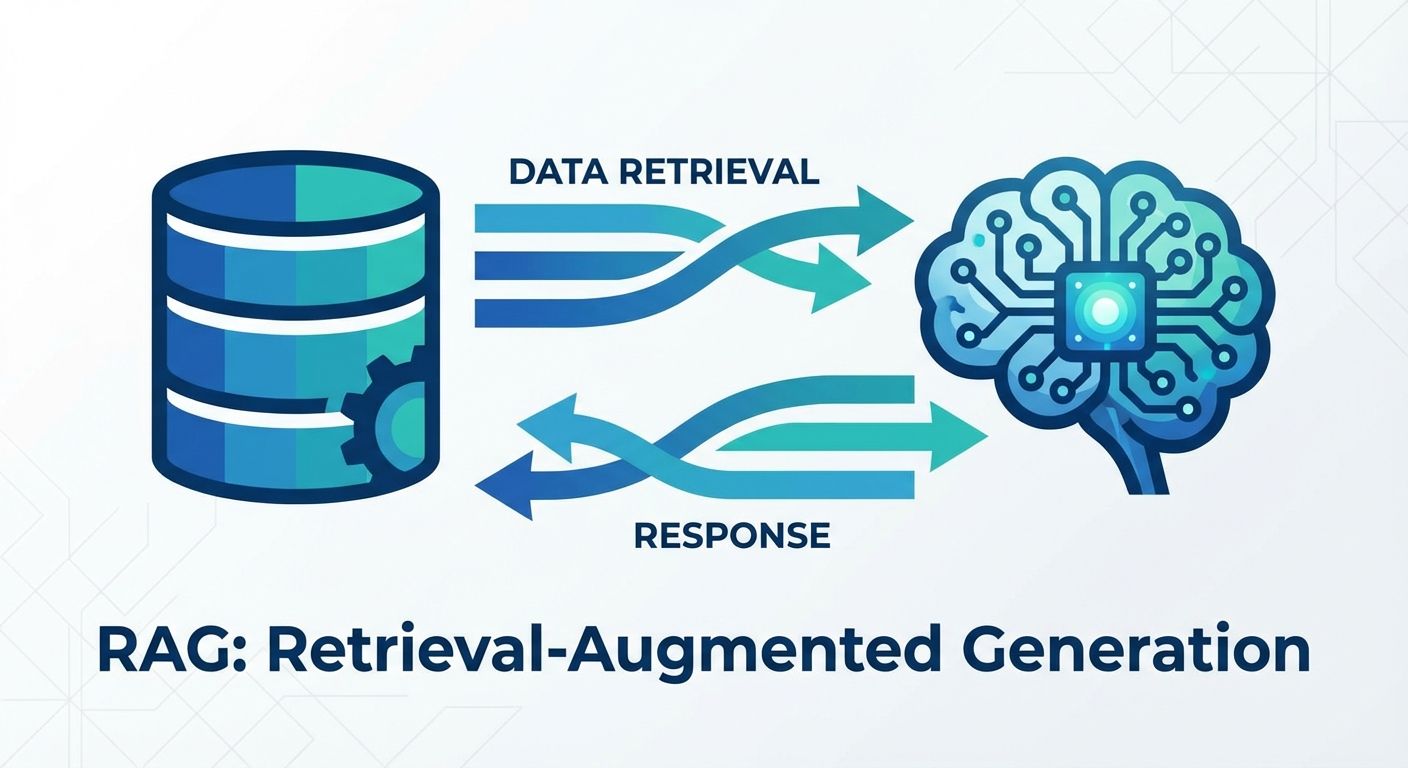

Pattern 3 - Underestimating Integration Complexity

Enterprise systems were not designed to be called by AI agents. They have rate limits, authentication quirks, data format inconsistencies, and undocumented behaviors that only surface under production load.

Teams estimate integration work based on API documentation, which describes ideal behavior. Production integration requires handling the gap between documented and actual behavior - retry logic for intermittent failures, data transformation for format mismatches, graceful degradation when systems are unavailable.
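The retry and degradation handling described above can be sketched in a few lines. This is a minimal illustration, not a production client: `TransientError` is a hypothetical marker exception, and the attempt count, delays, and fallback value are placeholders to tune per integration.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, HTTP 429/503)."""


def call_with_retry(fn: Callable[[], T], fallback: T,
                    attempts: int = 3, base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry transient failures with exponential backoff, then degrade."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                break  # out of attempts, fall through to the fallback
            # Exponential backoff with up to 10% jitter: ~0.5s, ~1s, ~2s, ...
            sleep(base_delay * (2 ** attempt) * (1 + random.random() * 0.1))
    return fallback  # graceful degradation instead of crashing the workflow
```

Only errors explicitly marked as transient are retried; anything else propagates, so a malformed request is not hammered against a system that will never accept it. The `sleep` parameter is injectable so the behavior can be tested without waiting.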

MCP reduces this complexity significantly by standardizing the tool interface layer. But even with MCP, the business logic behind each integration - what data to request, how to interpret responses, what constitutes an error versus an edge case - requires domain expertise that cannot be abstracted away.

Pattern 4 - Building Multi-Agent Before Single-Agent Works

Multi-agent architectures are intellectually appealing. The idea of specialized agents collaborating on complex tasks maps cleanly onto how organizations think about division of labor. But multi-agent coordination introduces an entire category of failure modes - deadlocks, circular delegations, conflicting actions, inconsistent state - that single-agent systems avoid entirely.

Every production multi-agent system we have seen started as a single agent that handled the full workflow. Only after the single-agent version was stable in production did the team decompose it into specialized sub-agents.

Start with one agent handling one workflow. Make it reliable. Monitor it. Then decompose when you have data about which subtasks benefit from specialization.

Pattern 5 - No Observability Infrastructure

Agent systems are fundamentally different from traditional software in one critical way - their behavior is non-deterministic. The same input can produce different reasoning paths, different tool call sequences, and different outputs. Without detailed observability, debugging production issues becomes impossible.

Production agent systems require logging of every reasoning step, every tool call with inputs and outputs, every decision point and the rationale behind the choice, latency per step, cost per execution, and success/failure rates by workflow segment.
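A minimal sketch of that kind of logging, assuming one structured record per step: the `traced_step` context manager, the event field names, and the in-memory `trace_events` list are all illustrative, and a real deployment would send these records to its own logging or tracing backend.

```python
import json
import time
import uuid
from contextlib import contextmanager
from typing import Dict, List

trace_events: List[Dict] = []  # stand-in for a real log sink


@contextmanager
def traced_step(run_id: str, kind: str, name: str, **inputs):
    """Record one reasoning step or tool call with latency, cost and status."""
    event = {"run_id": run_id, "kind": kind, "name": name,
             "inputs": inputs, "output": None, "cost_usd": 0.0, "status": "ok"}
    start = time.perf_counter()
    try:
        yield event  # the caller fills in output, rationale and cost_usd
    except Exception as exc:
        event["status"] = "error"
        event["error"] = repr(exc)
        raise
    finally:
        # Latency is recorded on both the success and the failure path.
        event["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
        trace_events.append(event)


# Hypothetical usage: wrap a tool call and attach its result and cost.
run_id = str(uuid.uuid4())
with traced_step(run_id, "tool_call", "lookup_order", order_id="A-17") as ev:
    ev["output"] = {"status": "shipped"}
    ev["rationale"] = "user asked where the order is"
    ev["cost_usd"] = 0.002
print(json.dumps(trace_events[-1]))
```

Because every event carries the same `run_id`, the full reasoning path of a single execution can be reconstructed later, and success rates or cost can be aggregated by `name`.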

Teams that treat observability as optional during development find themselves unable to diagnose production issues. The investment in logging and monitoring infrastructure pays for itself within the first week of production operation.

Pattern 6 - Ignoring the Cost Model

Agent systems that make multiple LLM calls, query external APIs, and process large context windows can be expensive to run at scale. A workflow that costs $0.50 per execution during testing costs $50,000 per month at 100,000 monthly executions.

Production cost modeling requires understanding token consumption per workflow, API call costs for external integrations, infrastructure costs for hosting and monitoring, and the scaling curve as usage grows.
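The arithmetic is simple enough to put in a small model early. The sketch below is a hedged example: the `WorkflowCost` class is hypothetical, and the token counts and prices are placeholders chosen only so that one execution comes to about $0.50, matching the figure above. They are not real vendor prices.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCost:
    """Per-execution cost inputs; every number used below is a placeholder."""
    input_tokens: int
    output_tokens: int
    usd_per_1k_input: float
    usd_per_1k_output: float
    external_api_usd: float = 0.0

    def per_execution(self) -> float:
        """LLM token cost plus external API cost for one run."""
        llm = (self.input_tokens / 1000 * self.usd_per_1k_input
               + self.output_tokens / 1000 * self.usd_per_1k_output)
        return llm + self.external_api_usd


def monthly_cost(cost: WorkflowCost, executions: int,
                 fixed_infra_usd: float = 0.0) -> float:
    """Variable cost at a given volume plus fixed hosting and monitoring."""
    return cost.per_execution() * executions + fixed_infra_usd


# Placeholder inputs: $0.40 input tokens + $0.06 output tokens + $0.04 API calls.
pilot = WorkflowCost(input_tokens=40_000, output_tokens=2_000,
                     usd_per_1k_input=0.01, usd_per_1k_output=0.03,
                     external_api_usd=0.04)
for volume in (1_000, 10_000, 100_000):
    print(f"{volume:>7} runs/month: ${monthly_cost(pilot, volume):,.0f}")
```

Running the model across several volumes shows the scaling curve directly; adding a `fixed_infra_usd` figure lets the same function cover hosting and monitoring.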

The infrastructure cost multiplier from pilot to production is typically 5-10x. Teams that do not model this before committing to production timelines face uncomfortable budget conversations when scaling begins.

Pattern 7 - No Human Feedback Loop

Agent systems improve through iteration, and iteration requires structured feedback from the humans who interact with agent outputs. Without a feedback mechanism, agents continue making the same mistakes indefinitely.

Production agent systems need channels for end users to flag incorrect outputs, processes for reviewing and categorizing feedback, mechanisms for incorporating corrections into agent behavior, and metrics that track improvement over time.
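A feedback loop needs very little machinery to start. The following sketch assumes a hypothetical `Feedback` record that a user or reviewer fills in for each run; the field names and category labels are illustrative. It tracks the error rate per period and surfaces the most common error categories so that corrections can be prioritized.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Feedback:
    run_id: str
    week: int            # any period index, e.g. week number
    correct: bool        # did the user accept the agent's output?
    category: str = ""   # reviewer-assigned label for incorrect outputs


def error_rate_by_week(items: List[Feedback]) -> Dict[int, float]:
    """Share of flagged outputs per week, to track improvement over time."""
    totals: Counter = Counter()
    errors: Counter = Counter()
    for fb in items:
        totals[fb.week] += 1
        if not fb.correct:
            errors[fb.week] += 1
    return {week: errors[week] / totals[week] for week in sorted(totals)}


def top_error_categories(items: List[Feedback], n: int = 3) -> List[Tuple[str, int]]:
    """Most frequent error categories, i.e. where corrections pay off first."""
    return Counter(fb.category for fb in items if not fb.correct).most_common(n)
```

A falling value from `error_rate_by_week` is the improvement metric; `top_error_categories` tells the team which prompt, tool, or data fix to work on next.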

The organizations that succeed with agent deployment treat it as an ongoing operational function, not a one-time deployment. They staff it, measure it, and improve it continuously.

What the Successful 12% Do Differently

The organizations that reach production share common practices.

They start with workflow mapping, not technology selection. They build governance into the architecture from day one. They invest in observability before they need it. They start with single-agent systems and decompose only when data supports it. They model costs at production scale before committing to timelines. And they build feedback loops that drive continuous improvement.

None of these practices are technically difficult. They require organizational discipline - the willingness to slow down during development to avoid the 88% failure rate that comes from rushing to demo.

The technology for production AI agents exists and works. The gap is in how organizations approach the implementation, not in what the technology can do.