The Hallucination Problem

AI models can generate confident, well-structured answers to questions their training data never covered. In a consumer context, this is an inconvenience. In an enterprise context, it is a liability.

When an AI agent tells a field operator the wrong torque specification for a pressure vessel, or provides incorrect compliance guidance to an auditor, the consequences are measured in safety incidents and regulatory penalties. Enterprise AI cannot afford to guess.

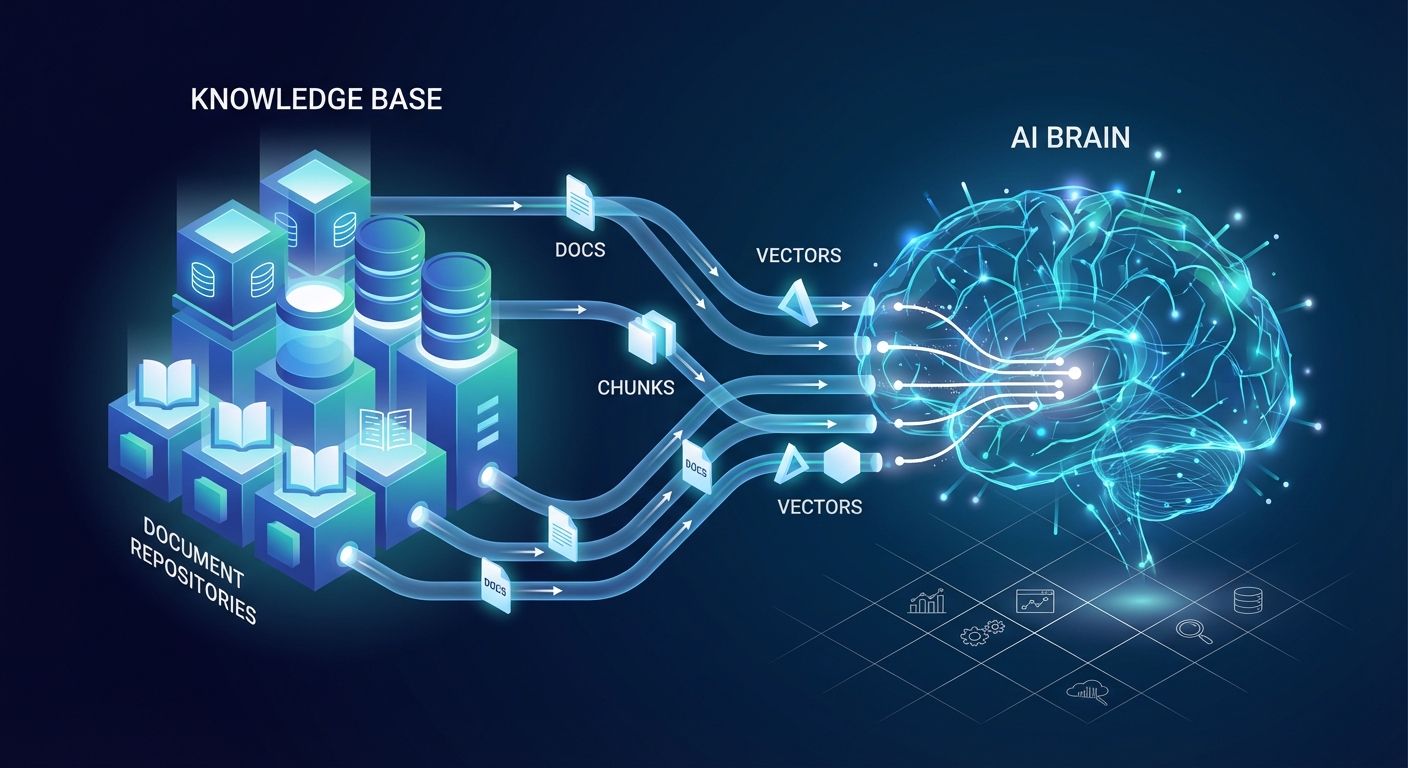

Retrieval-augmented generation (RAG) addresses this by changing how agents access knowledge. Instead of relying on training data that may be outdated or irrelevant to your operations, a RAG agent must retrieve relevant documents from your knowledge base before it generates a response. The agent answers from your data, not from its training, and it can decline to answer when nothing relevant is found.
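
The retrieve-then-generate flow can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `KNOWLEDGE_BASE` contents, the function names, and the keyword-overlap scoring are all assumptions made for the example. A real system would more likely use a document store with embedding-based search, and would pass the resulting prompt to a language model.

```python
import re

# Hypothetical in-memory knowledge base with placeholder text.
# A real deployment would query a document store or vector index.
KNOWLEDGE_BASE = {
    "vessel-manual": "Flange bolts on the example pressure vessel are "
                     "tightened to the torque listed in the maintenance manual.",
    "audit-policy": "Audit records are retained according to the "
                    "compliance policy.",
}

# Small illustrative stopword list so common words do not count as matches.
STOPWORDS = {"a", "an", "and", "are", "for", "in", "is", "of", "on",
             "the", "to", "what"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question, k=2):
    """Return up to k (doc_id, text) pairs that share words with the question."""
    query_tokens = tokenize(question)
    scored = []
    for doc_id, text in KNOWLEDGE_BASE.items():
        overlap = len(query_tokens & tokenize(text))
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    # Highest overlap first; keyword overlap stands in for a real relevance score.
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_prompt(question, passages):
    """Build a grounded prompt, or return None when nothing was retrieved."""
    if not passages:
        return None  # Caller should decline to answer rather than guess.
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


question = "What is the torque for the pressure vessel flange bolts?"
prompt = build_prompt(question, retrieve(question))
```

The key design choice is that generation is gated on retrieval: when `retrieve` returns nothing, `build_prompt` returns `None`, so the calling code can refuse to answer instead of handing the model an ungrounded question.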