Your CEO read about Llama being "free" and wants to know why you're paying for GPT-5. Your security team wants everything on-premise. Your developers want to use Claude. Here's what this decision actually means for your organization.

Understanding Open vs Closed Source AI

Open Source AI means the model's weights, architecture, and often training code are freely available. You can download models like Meta's Llama, Mistral, or DeepSeek, modify them, run them on your own servers, and even fine-tune them on your proprietary data. You have complete control - but also complete responsibility for infrastructure, maintenance, and performance.

Closed Source AI refers to proprietary models like OpenAI's GPT, Anthropic's Claude, or Google's Gemini that are accessed through APIs or web interfaces. The companies control the models, handle all infrastructure, and provide regular updates. You pay for usage but get enterprise support, guaranteed uptime, and no operational overhead.

Think of it like running your own email server versus using Gmail - one gives you total control, the other gives you convenience and reliability.

The "Free" Open Source Myth

Llama 3.3, DeepSeek V3, and other open source models are free like a puppy is free. The model weights cost nothing. Running them costs everything.

To run Llama 3.3 70B effectively, you need roughly 140GB of VRAM for the weights at 16-bit precision (FP16/BF16), or about 70GB with INT8 quantization, plus headroom for the KV cache. In practice that means 2x NVIDIA A100 80GB GPUs at $3,700/month in cloud costs for the 16-bit model, or a single 80GB H100 for the INT8 model (a tight fit) at even higher per-GPU rates. One fintech calculated their "free" Llama deployment would cost $425K in year one when including engineers, infrastructure, and maintenance.
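The 140GB and 70GB figures come from simple arithmetic: parameter count times bytes per parameter. Here is a minimal sketch of that estimate. It covers weights only and ignores the KV cache, activations, and framework overhead, so real deployments need more; the function name and the precision table are illustrative.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes).

    Assumption: excludes KV cache, activations, and runtime overhead.
    """
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for precision in ("fp16", "int8", "int4"):
        print(f"70B at {precision}: {weight_memory_gb(70, precision):.0f} GB")
```

For a 70B model this gives 140 GB at 16-bit and 70 GB at INT8, which is why the 16-bit version needs two 80GB cards.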

Meanwhile, Claude Team and ChatGPT Team each cost $25/user/month (annual) or $30/user/month (monthly billing). GPT-5 via Azure OpenAI runs on consumption-based pricing. No infrastructure, no maintenance, just per-seat fees or API calls.
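To put the seat prices next to the year-one self-hosting figure, the sketch below does the arithmetic. It is a rough comparison only: a Team seat and a self-hosted deployment are not the same product, and the function names are illustrative.

```python
def annual_seat_cost(users: int, price_per_user_month: float) -> float:
    """Yearly cost of a per-seat plan."""
    return users * price_per_user_month * 12


def seats_for_budget(annual_budget: float, price_per_user_month: float) -> int:
    """How many full seats a yearly budget would cover."""
    return int(annual_budget // (price_per_user_month * 12))


if __name__ == "__main__":
    # 100 users on annual billing at $25/user/month.
    print(annual_seat_cost(100, 25))      # 30000
    # Seats that the fintech's $425K year-one estimate would buy.
    print(seats_for_budget(425_000, 25))  # 1416
```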

The math only works for open source if you're processing over 50 million tokens monthly or have extreme compliance requirements. Below that threshold, you're paying more for control you might not need.
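A break-even threshold like this depends on two inputs: your fixed monthly self-hosting cost and the per-token API price you would otherwise pay. The sketch below makes that relationship explicit. The $10 per million tokens used in the example is a placeholder, not a quoted rate, and the function names are hypothetical.

```python
def break_even_tokens_per_month(fixed_monthly_cost: float,
                                api_price_per_million: float,
                                self_host_price_per_million: float = 0.0) -> float:
    """Monthly token volume at which self-hosting matches API spend.

    fixed_monthly_cost: GPUs, engineers, tooling (your own estimate).
    api_price_per_million: blended API price per 1M tokens (assumption).
    self_host_price_per_million: marginal self-hosting cost per 1M tokens.
    """
    saving_per_million = api_price_per_million - self_host_price_per_million
    if saving_per_million <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    return fixed_monthly_cost / saving_per_million * 1_000_000


def implied_api_price_per_million(fixed_monthly_cost: float,
                                  tokens_per_month: float) -> float:
    """API price per 1M tokens at which a given volume is the break-even point."""
    return fixed_monthly_cost / (tokens_per_month / 1_000_000)


if __name__ == "__main__":
    # GPU rent only ($3,700/month) against a placeholder $10 per 1M tokens.
    print(break_even_tokens_per_month(3_700, 10.0))
    # Price at which 50M tokens/month would be the break-even volume.
    print(implied_api_price_per_million(3_700, 50_000_000))
```

The result is very sensitive to the API price and to what you count as fixed cost, so rerun it with your own numbers rather than relying on a single threshold.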

What You Actually Control (And Don't)

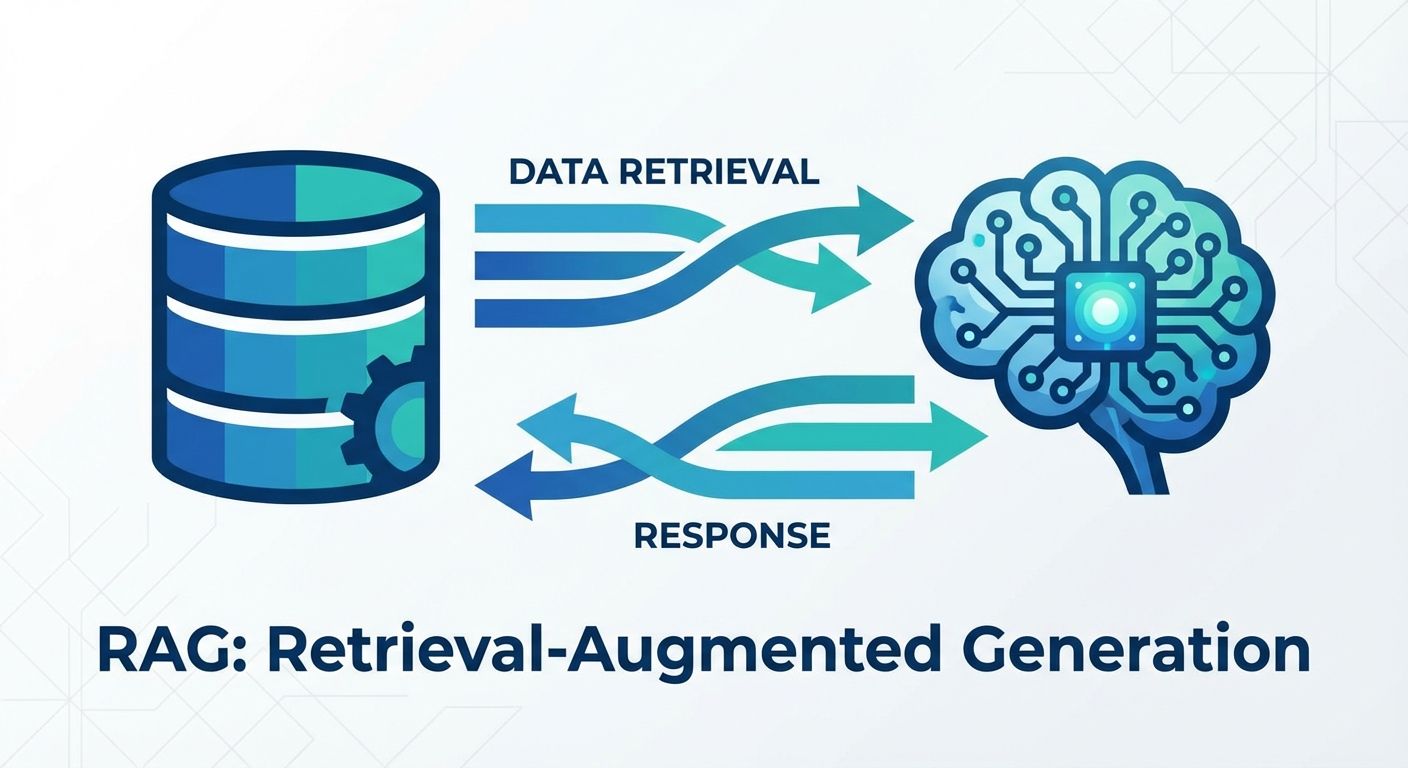

Open Source Control: You can modify the model, fine-tune on your data, and run it anywhere. A healthcare company fine-tuned Llama 3.3 on medical terminology and got 35% better accuracy on their specific use cases. You own the deployment, the data never leaves your servers, and you're not dependent on any vendor.

But you also own every problem. When the model hallucinates, that's your problem. When it needs updating, that's your engineering time. When it goes down at 3 AM, that's your incident response. And with the new mixture-of-experts architectures, debugging has become significantly more complex.

Closed Source Reality: You get what OpenAI, Anthropic, or Google delivers. You can't modify the base model, though there are workarounds: Azure OpenAI now offers fine-tuning for GPT-4, and Claude's 200K context window allows extensive customization through prompting. Your data goes through their servers (though enterprise agreements include specific data protection clauses).

But when GPT-5 launched in August 2025, enterprise customers got the improvements automatically. When infrastructure fails, it's their 99.9% SLA commitment. When new capabilities launch, you get them immediately without redeployment.
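To put a 99.9% SLA in concrete terms, the sketch below converts an uptime percentage into allowed downtime. It assumes a 30-day month and an SLA measured monthly, which varies by vendor; the function name is illustrative.

```python
def allowed_downtime_minutes(sla_percent: float, days: int = 30) -> float:
    """Minutes of downtime permitted per period under a given uptime SLA."""
    total_minutes = days * 24 * 60
    return total_minutes * (100 - sla_percent) / 100


if __name__ == "__main__":
    # 99.9% over a 30-day month allows roughly 43 minutes of downtime.
    print(round(allowed_downtime_minutes(99.9), 1))
```

If you self-host, this is the number your own on-call rotation has to beat.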

The Privacy Question Everyone Asks Wrong

"We need open source for data privacy" is what every CISO says initially. But privacy isn't about where the model runs - it's about data governance and contractual guarantees.

OpenAI's enterprise agreement includes a commitment not to train on your data and SOC 2 Type II compliance. Your prompts don't train their models. Azure OpenAI goes further with Data Zones - letting you control which geographic regions process your data while still accessing the latest models. Microsoft explicitly states data is encrypted at rest (AES-256) and in transit (TLS 1.2+), with 30-day retention only for abuse monitoring (which eligible customers can apply to opt out of).

Meanwhile, that open source model running in your data center might be logging everything to debug errors. Your contractors might be copying outputs to their laptops. Privacy comes from processes and contracts, not just premises.

One manufacturing client learned this the hard way: "We spent eight months deploying Llama 3.3 for privacy, then realized our bigger risk was employees using personal ChatGPT accounts with company data."

Performance Reality Check

The benchmarks tell an interesting story. OpenAI's new gpt-oss-120b achieves near-parity with OpenAI's o4-mini on core reasoning benchmarks. Llama 3.3 70B matches Claude 3.5 Sonnet on many benchmarks while using fewer resources. For most business use cases - document analysis, code generation, customer support - performance differences are under 10%.

The real differences appear in specialized capabilities. GPT-5 accepts up to 272K input tokens, enough to hold many entire codebases in one request. Claude's constitutional AI approach is designed to produce more predictable outputs, which matters in regulated industries. Llama 3.3's efficiency makes it well suited to high-volume batch processing.

A logistics company tested all major models on route optimization. Performance variance was 7%. They chose based on total cost of ownership, not benchmark scores.

The Real Decision Framework

Choose open source when:

- Processing over 50M tokens/month consistently

- Regulatory requirements mandate on-premise deployment

- You need model customizations worth the engineering investment

- You have ML engineers who want ownership

- Batch processing workloads can leverage spot instances (40-60% savings)

Choose closed source when:

- You want to start using AI tomorrow

- Total usage under 10M tokens/month

- You need consistent updates and new capabilities

- Your team should focus on business logic, not infrastructure

- You need enterprise SLAs and support

The hybrid approach that actually works: Use closed source APIs for prototyping and variable workloads. Deploy open source for high-volume, specialized operations. One retailer uses Claude for customer service but runs fine-tuned Llama 3.3 for product descriptions - processing 12M monthly at 70% lower cost than API calls would be.
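The criteria above can be sketched as a small decision helper. This is one possible encoding, not a definitive rule set: the 10M and 50M thresholds come from the lists above, while treating the range in between as "hybrid" and letting an on-premise mandate override everything else are assumptions made for illustration. The `Workload` type and `recommend` function are hypothetical names.

```python
from dataclasses import dataclass

OPEN_SOURCE_THRESHOLD = 50_000_000    # tokens/month, from the open source list
CLOSED_SOURCE_THRESHOLD = 10_000_000  # tokens/month, from the closed source list


@dataclass
class Workload:
    tokens_per_month: int
    on_prem_required: bool = False   # regulatory mandate for on-premise
    has_ml_engineers: bool = False   # team able to own the deployment


def recommend(w: Workload) -> str:
    """Return 'open source', 'closed source', or 'hybrid' for a workload."""
    if w.on_prem_required:
        # Assumption: a hard regulatory mandate outweighs cost arguments.
        return "open source"
    if w.tokens_per_month < CLOSED_SOURCE_THRESHOLD:
        return "closed source"
    if w.tokens_per_month > OPEN_SOURCE_THRESHOLD and w.has_ml_engineers:
        return "open source"
    # Assumption: everything in between starts as a hybrid evaluation.
    return "hybrid"


if __name__ == "__main__":
    print(recommend(Workload(tokens_per_month=2_000_000)))
    print(recommend(Workload(tokens_per_month=80_000_000, has_ml_engineers=True)))
    print(recommend(Workload(tokens_per_month=20_000_000)))
```

A real evaluation would also weigh the softer criteria, such as how quickly you need to start and how much customization is worth to you.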

The Hidden Costs Nobody Mentions

Open Source Hidden Costs:

- GPU availability issues (H100s have 3-6 month lead times)

- Quantization reduces costs but degrades coding capabilities by up to 30%

- Engineering time: expect 2-3 FTEs for production deployment

- Monitoring and observability tools add 15-20% to infrastructure costs

- Model versioning and rollback systems

Closed Source Hidden Costs:

- Rate limits during peak times (Claude introduced weekly caps in August 2025)

- Vendor lock-in makes switching expensive

- Limited customization compared to fine-tuned models

- Data transfer costs for large-scale operations

- Premium pricing for advanced features and top-tier models (check current per-token rates before budgeting)

What Changed in 2025

The landscape shifted dramatically this year. OpenAI released gpt-oss models under Apache 2.0 license - their first open language models since GPT-2. The smaller gpt-oss-20b runs on consumer hardware with just 16GB of memory, while gpt-oss-120b fits on a single 80GB GPU and approaches the performance of OpenAI's smaller proprietary reasoning models.

Anthropic introduced weekly rate limits in August 2025 after "a small handful of users" overwhelmed Claude Code. Max subscribers on the $200/month tier can now expect 240-480 hours of Sonnet 4 weekly for coding tasks.

Microsoft's Azure OpenAI Data Zones now let you specify exact geographic boundaries for data processing while maintaining access to all models - addressing the main enterprise concern about cloud AI.

Meta's Llama 3.3 70B, released in December 2024, delivers 405B-level performance with 85% fewer resources, and through 2025 it fundamentally changed the economics of self-hosting.

The Decision Most Make

After evaluation, here's what enterprises actually do:

65% choose closed source initially, using ChatGPT Team or Claude Team for immediate productivity gains. Average cost: $25-30/user/month with predictable budgeting.

25% adopt hybrid approaches within 12 months, keeping closed source for general use while deploying open source for specific high-volume tasks.

10% go fully open source, typically those with existing ML infrastructure and regulatory requirements.

Start with closed source APIs to understand your actual usage patterns. Track token consumption, peak loads, and use cases. If you're consistently exceeding 50M tokens monthly with predictable workloads, evaluate open source. Most discover they never reach that threshold.
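A minimal sketch of that tracking step: aggregate token counts by calendar month and check whether recent months stay above the threshold. Reading "consistently" as three consecutive recorded months is an assumption, and `UsageTracker` is a hypothetical helper, not part of any vendor SDK. In practice you would feed it the token counts your API responses or billing exports report.

```python
from collections import defaultdict
from datetime import date


class UsageTracker:
    """Aggregates token usage per calendar month."""

    def __init__(self, threshold: int = 50_000_000):
        self.threshold = threshold
        self._monthly = defaultdict(int)  # (year, month) -> total tokens

    def record(self, day: date, prompt_tokens: int, completion_tokens: int) -> None:
        """Add one request's token counts to that day's month."""
        self._monthly[(day.year, day.month)] += prompt_tokens + completion_tokens

    def monthly_totals(self) -> dict:
        return dict(self._monthly)

    def consistently_over(self, min_months: int = 3) -> bool:
        """True if the last `min_months` recorded months all exceed the threshold."""
        recent = sorted(self._monthly)[-min_months:]
        return (len(recent) == min_months
                and all(self._monthly[m] > self.threshold for m in recent))


if __name__ == "__main__":
    tracker = UsageTracker()
    tracker.record(date(2025, 1, 15), prompt_tokens=40_000_000, completion_tokens=15_000_000)
    tracker.record(date(2025, 2, 10), prompt_tokens=45_000_000, completion_tokens=12_000_000)
    tracker.record(date(2025, 3, 5), prompt_tokens=8_000_000, completion_tokens=2_000_000)
    print(tracker.monthly_totals())
    print(tracker.consistently_over())  # False: March is under the threshold
```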

The question isn't "open or closed?" It's "build infrastructure or buy capability?" For 90% of enterprises, buying the capability lets you focus on what actually differentiates your business - which isn't running AI infrastructure.