Enterprise teams need AI solutions that work beyond proof-of-concepts. HuggingFace reached $130.1M revenue in 2024 serving companies like Amazon, Nvidia, and Microsoft with production-ready AI infrastructure.

This guide covers what HuggingFace is, how it works technically, and why enterprises choose it for deploying AI at scale.

What HuggingFace Is

HuggingFace is often described as the GitHub of machine learning. The platform hosts over 1 million AI models, 190,000 datasets, and 55,000 demo applications that developers and enterprises use to build AI systems.

The company operates three main products:

Model Hub - A repository of pre-trained AI models covering text, vision, audio, and multimodal tasks. Companies download these models instead of training from scratch, saving months of development time.

Transformers Library - An open-source Python library that provides standardized APIs for 40+ model architectures, including BERT, GPT, and T5. Developers worldwide download it about 25 million times per month.

Enterprise Solutions - Managed infrastructure, private model hosting, and dedicated support for companies deploying AI in production environments.

How HuggingFace Works Technically

The platform centers around the Transformers library, which acts as the model-definition framework for state-of-the-art machine learning. Here's the technical architecture:

Model Architecture

Every model follows a three-component structure:

- Configuration - Defines model parameters like hidden layers, attention heads, and vocabulary size

- Model weights - Stored in safetensors format for security and efficiency

- Preprocessor - Handles data formatting and tokenization

This standardization means models work consistently across PyTorch, TensorFlow, and JAX frameworks. Enterprises can switch between training libraries or inference engines without rewriting model definitions.
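As a rough illustration of the three-component layout, the sketch below writes and reloads only the configuration component using the Python standard library. The file names follow common repository conventions and are an assumption here, as are the helper names `save_config`, `load_config`, and `attention_head_size`; the numeric values match the published BERT-base settings. It does not use the Transformers library itself.

```python
import json
import tempfile
from pathlib import Path

# Illustrative configuration; the values match the published BERT-base settings.
CONFIG = {
    "model_type": "bert",
    "hidden_size": 768,
    "num_hidden_layers": 12,
    "num_attention_heads": 12,
    "vocab_size": 30522,
}

# Conventional file names for the three components (assumed layout;
# real repositories may shard weights or ship extra tokenizer files).
COMPONENT_FILES = {
    "configuration": "config.json",
    "weights": "model.safetensors",
    "preprocessor": "tokenizer.json",
}


def save_config(directory: Path, config: dict) -> Path:
    """Write the configuration component to disk as JSON."""
    path = directory / COMPONENT_FILES["configuration"]
    path.write_text(json.dumps(config, indent=2))
    return path


def load_config(path: Path) -> dict:
    """Read the configuration back, as a loader would before building a model."""
    return json.loads(path.read_text())


def attention_head_size(config: dict) -> int:
    """Each attention head gets an equal slice of the hidden dimension."""
    return config["hidden_size"] // config["num_attention_heads"]


with tempfile.TemporaryDirectory() as tmp:
    loaded = load_config(save_config(Path(tmp), CONFIG))

print(attention_head_size(loaded))
```

Because the configuration is plain JSON, it can be inspected or version-controlled independently of the much larger weights file.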

The Pipeline System

HuggingFace's Pipeline class provides high-level inference for common tasks:

from transformers import pipeline

classifier = pipeline("sentiment-analysis")

result = classifier("This quarterly report shows strong growth")

# Output: [{'label': 'POSITIVE', 'score': 0.9998}]

Pipelines support text classification, question answering, image recognition, and 30+ other tasks out of the box.
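In production, the raw pipeline output is usually post-processed before it reaches a business system. The following is a minimal sketch, assuming results arrive as a list of `label`/`score` dictionaries like the sentiment output shown above. The function name `confident_labels` and the 0.9 threshold are hypothetical choices, not part of the Transformers API.

```python
from typing import Dict, List, Union

Result = Dict[str, Union[str, float]]


def confident_labels(results: List[Result], threshold: float = 0.9) -> List[str]:
    """Return each label, or 'UNCERTAIN' when its score is below the threshold."""
    return [
        str(r["label"]) if float(r["score"]) >= threshold else "UNCERTAIN"
        for r in results
    ]


# Hand-written results in the same shape as the sentiment pipeline output.
sample = [
    {"label": "POSITIVE", "score": 0.9998},
    {"label": "NEGATIVE", "score": 0.61},
]

print(confident_labels(sample))  # ['POSITIVE', 'UNCERTAIN']
```

Routing low-confidence predictions to a human reviewer is one common way to use such a threshold.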

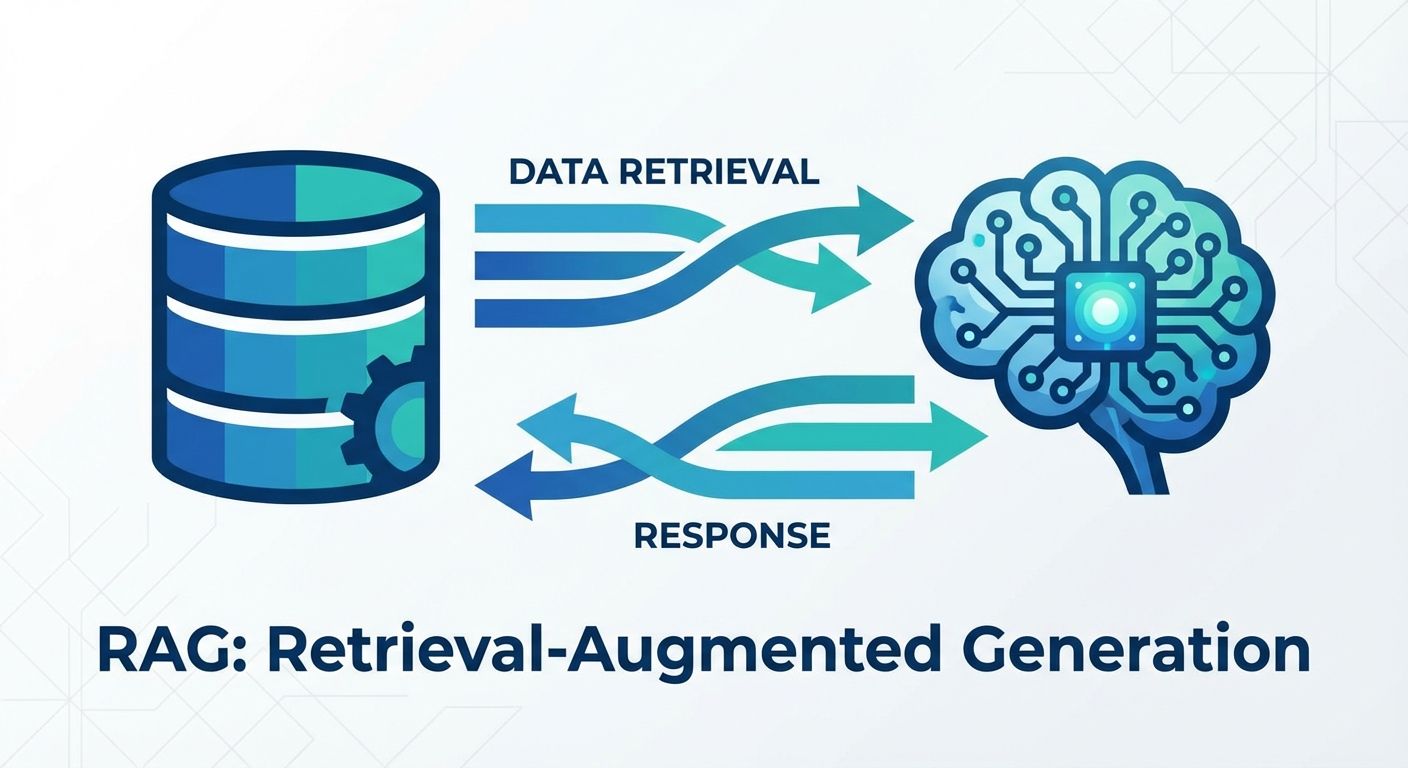

Inference Infrastructure

For production deployments, HuggingFace offers:

- Inference API - RESTful endpoints with automatic scaling

- Inference Endpoints - Dedicated infrastructure for enterprise workloads

- On-premises deployment - Private cloud options for regulated industries

The infrastructure handles model loading, GPU optimization, and traffic routing automatically.
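Calling a hosted model over REST typically comes down to an authenticated JSON POST. The sketch below builds such a request with the standard library but does not send it, so it runs offline. The URL and token are placeholders, `build_request` is a hypothetical helper, and the `{"inputs": ...}` payload shape is an assumption to check against the documentation for your specific endpoint and task.

```python
import json
import urllib.request

# Placeholder values: substitute the URL and token issued for your own deployment.
ENDPOINT_URL = "https://example.invalid/my-sentiment-endpoint"
API_TOKEN = "hf_xxx"


def build_request(
    text: str, url: str = ENDPOINT_URL, token: str = API_TOKEN
) -> urllib.request.Request:
    """Build (but do not send) an authenticated JSON POST for one input text."""
    body = json.dumps({"inputs": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request("This quarterly report shows strong growth")

# Sending is left commented out so the sketch runs without network access:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
print(request.get_method(), request.full_url)
```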

How HuggingFace Came to Be

HuggingFace began in 2016 as a chatbot app for teenagers. French entrepreneurs Clément Delangue, Julien Chaumond, and Thomas Wolf founded the company in New York, naming it after the 🤗 hugging face emoji.

The original product was an "AI best friend forever" that provided emotional support through conversation. The founders built sophisticated natural language processing models to power the chatbot's responses.

The Pivot That Changed AI

In 2018, the founders made a crucial decision: they open-sourced their NLP models. The reaction was so enthusiastic that it redefined their mission "organically and progressively."

They released their first open-source library, which became the Transformers framework. The developer community's response was immediate - thousands of AI researchers and companies began using their standardized model implementations.

This pivot transformed HuggingFace from a consumer chatbot company into an infrastructure layer of the AI industry. As of 2025, more than 5 million AI builders use the platform to develop and deploy machine learning applications.

HuggingFace's Impact on Enterprise AI

The platform addresses a fundamental enterprise problem - AI model deployment complexity. Before HuggingFace, companies needed separate teams for research, engineering, and infrastructure to deploy a single AI model.

Cost Reduction Through Standardization

Enterprises cut development costs by starting from pre-trained models instead of training from scratch. Cost efficiency is a major driver of adoption, because training a large model from scratch requires compute and data investments that most organizations cannot justify.

Companies like Intel, Qualcomm, Pfizer, and Bloomberg use HuggingFace's enterprise solutions to deploy AI without building internal ML infrastructure teams.

Open Source Philosophy in Practice

HuggingFace's open-source approach creates transparency that enterprises require for production systems. Companies can inspect model architectures, understand training data, and customize implementations for specific use cases.

This transparency contrasts with proprietary AI services where companies cannot audit or modify core functionality. Open models reduce vendor lock-in, giving organizations control over their technology stack.

Technical Deep Dive - Enterprise Features

Transformers Library Integration

The Transformers library integrates with enterprise ML workflows through standardized interfaces. Companies can:

- Fine-tune models on proprietary data

- Deploy across cloud or on-premises infrastructure

- Scale inference from thousands to millions of requests

- Monitor model performance and data drift

The library supports distributed training across multiple GPUs and nodes, enabling enterprises to handle large-scale AI workloads efficiently.
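Monitoring for data drift can start with something as simple as comparing the distribution of predicted labels over time. The sketch below uses total variation distance between a baseline window and a live window. This is one generic technique chosen for illustration, not a HuggingFace feature; the function names, sample data, and alert threshold are all hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence


def label_distribution(labels: Sequence[str]) -> Dict[str, float]:
    """Convert a sequence of predicted labels into relative frequencies."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}


def total_variation_distance(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Half the L1 distance between two distributions: 0 = identical, 1 = disjoint."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(label, 0.0) - q.get(label, 0.0)) for label in labels)


# Invented prediction logs: the live window is noticeably more negative.
baseline = ["POSITIVE"] * 8 + ["NEGATIVE"] * 2
live = ["POSITIVE"] * 5 + ["NEGATIVE"] * 5

DRIFT_THRESHOLD = 0.2  # hypothetical alerting threshold
drift = total_variation_distance(label_distribution(baseline), label_distribution(live))
print(f"drift={drift:.2f}, alert={drift > DRIFT_THRESHOLD}")
```

A shift in predicted labels does not prove the inputs changed, so a check like this is best treated as a trigger for closer investigation.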

Enterprise Security and Compliance

HuggingFace enterprise solutions include:

- Single Sign-On (SSO) - Integration with corporate identity systems

- Access Controls - Role-based permissions for models and datasets

- Audit Logs - Comprehensive tracking of model usage and modifications

- Regional Data Storage - Compliance with data residency requirements

These features address regulatory requirements in healthcare, finance, and government sectors.
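To make role-based access control and audit logging concrete, here is a minimal sketch of how the two ideas fit together. It is not HuggingFace's implementation: the role names, permission sets, `AuditedRepository` class, and repository name are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical roles and the actions each one permits.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "reader": {"read"},
    "contributor": {"read", "write"},
    "admin": {"read", "write", "delete"},
}


@dataclass
class AuditedRepository:
    """A model repository that records every access decision."""

    name: str
    audit_log: List[dict] = field(default_factory=list)

    def authorize(self, user: str, role: str, action: str) -> bool:
        """Check the role's permissions and log the decision, allowed or not."""
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "role": role,
                "action": action,
                "allowed": allowed,
            }
        )
        return allowed


repo = AuditedRepository("internal/credit-risk-model")
print(repo.authorize("alice", "reader", "read"))   # True
print(repo.authorize("alice", "reader", "write"))  # False
print(len(repo.audit_log))                         # 2
```

Logging denied attempts as well as granted ones is the design choice that makes the log useful for compliance reviews.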

Production Deployment Options

Enterprises deploy HuggingFace models through multiple paths:

Inference Endpoints - Managed infrastructure with automatic scaling, load balancing, and monitoring. Companies pay based on usage without managing servers.

On-premises Deployment - Private installations for organizations with strict data governance requirements. HuggingFace provides the same model repository and management tools within customer infrastructure.

Hybrid Solutions - Combination of cloud-hosted models for development and on-premises deployment for production workloads.

Business Model and Enterprise Value

HuggingFace generates revenue primarily through enterprise subscriptions and managed services. The company reached $130.1M revenue in 2024, with most income from enterprise customers.

Pricing Structure

- Pro Plan - $9/month for individual developers with private repositories and early access features

- Enterprise Plan - $20/user/month with SSO, audit logs, and dedicated support

- Custom Solutions - Usage-based pricing for high-volume deployments and specialized requirements
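For budgeting, the per-seat prices above translate into annual figures with simple arithmetic. The sketch below uses the listed $20/user/month Enterprise price; the 50-person team size and the helper `annual_seat_cost` are hypothetical, and usage-based compute charges are not included.

```python
ENTERPRISE_PRICE_PER_USER_MONTH = 20  # USD, from the pricing list above
PRO_PRICE_PER_MONTH = 9               # USD, from the pricing list above


def annual_seat_cost(users: int, price_per_user_month: int) -> int:
    """Seat cost per year in USD; excludes usage-based compute charges."""
    if users < 0:
        raise ValueError("users must be non-negative")
    return users * price_per_user_month * 12


team_size = 50  # hypothetical team
print(annual_seat_cost(team_size, ENTERPRISE_PRICE_PER_USER_MONTH))  # 12000
```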

Enterprise Customer Growth

The platform serves over 1,000 paying customers including major technology companies and Fortune 500 enterprises. Customer growth accelerated as companies moved from AI experimentation to production deployment.

Enterprise customers receive guidance from HuggingFace's ML experts, dedicated support channels, and customized deployment architecture.

Current Market Position and 2025 Outlook

HuggingFace achieved a $4.5 billion valuation with backing from Google, Amazon, Nvidia, IBM, and Salesforce. This investor lineup reflects the platform's strategic importance to the AI ecosystem.

The company's website receives about 25 million visits per month, and the platform hosts models that power applications used by millions of end users daily.

2025 Growth Projections

HuggingFace expects 15 million AI builders to use the platform by 2025. Enterprise adoption is expected to accelerate as companies move beyond pilot programs to production AI systems.

Recent model releases on the platform include OpenAI's gpt-oss and ModernBERT variants, demonstrating continued innovation in the open-source AI ecosystem.

Implementation Considerations for Enterprises

Organizations evaluating HuggingFace should consider these factors:

Model Selection - The platform hosts thousands of models with varying performance, licensing, and computational requirements. Enterprises need clear criteria for model evaluation and selection.

Infrastructure Planning - Production AI systems require GPU compute, storage, and networking resources. HuggingFace provides guidance on infrastructure sizing and optimization.

Data Integration - Most enterprises need to fine-tune models on proprietary data. This requires secure data pipelines and model versioning systems.

Governance and Compliance - AI systems require monitoring for bias, performance degradation, and regulatory compliance. HuggingFace provides tools for model governance and audit trails.
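The model selection criteria described above can be encoded as an explicit filter, which makes the evaluation repeatable. This is a minimal sketch under stated assumptions: the `Candidate` fields, the `shortlist` function, and every entry in the candidate list are invented for illustration and do not describe real models.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Candidate:
    name: str
    license: str
    parameters_millions: int
    eval_score: float  # score on your own evaluation set, higher is better


def shortlist(
    candidates: Iterable[Candidate],
    allowed_licenses: Set[str],
    max_parameters_millions: int,
) -> List[Candidate]:
    """Keep models that pass the license and size limits, best score first."""
    eligible = [
        c
        for c in candidates
        if c.license in allowed_licenses
        and c.parameters_millions <= max_parameters_millions
    ]
    return sorted(eligible, key=lambda c: c.eval_score, reverse=True)


# Invented candidates for illustration only.
candidates = [
    Candidate("model-a", "apache-2.0", 110, 0.89),
    Candidate("model-b", "cc-by-nc-4.0", 340, 0.93),  # non-commercial license
    Candidate("model-c", "mit", 7000, 0.95),          # exceeds the size budget
    Candidate("model-d", "mit", 66, 0.86),
]

picked = shortlist(candidates, {"apache-2.0", "mit"}, max_parameters_millions=500)
print([c.name for c in picked])  # ['model-a', 'model-d']
```

Applying hard constraints (license, size) before ranking by quality keeps a high-scoring but unusable model from dominating the decision.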

HuggingFace transforms how enterprises approach AI implementation. The platform reduces development time, provides production-ready infrastructure, and enables customization without vendor lock-in.

Companies serious about AI deployment find that HuggingFace's combination of open-source transparency and enterprise support addresses both the technical and business requirements of scaling AI applications.

Making the right AI platform decisions requires balancing technical capabilities with operational realities. Organizations that combine industry knowledge with proven platforms see faster, more sustainable results in their AI initiatives.